
Are you still using the mean and median values to clean the blanks?

Mathematical foundations of blank filling

Working with missing values is a common task in machine learning. It’s usually the very first step to take, before building a pre-processing pipeline. The most common approach to blank filling is to use the mean or the median value. Although this is a widespread practice, there may be a more data-driven approach we can use.
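Part of the reason these two statistics are the default is mathematical. If every blank is replaced by the same constant c, the mean is the c that minimizes the mean squared error and the median is the c that minimizes the mean absolute error. A minimal numerical check of that fact, on a made-up five-point sample with one outlier, is a brute-force search over candidate constants:

```python
import numpy as np

# Made-up sample with one large outlier
x = np.array([1.0, 2.0, 3.0, 4.0, 100.0])

# Candidate constants c that could be used as a single fill value (step 0.01)
grid = np.linspace(0.0, 100.0, 10001)

# Error of replacing every value with c, for each candidate c
mse = ((x[None, :] - grid[:, None]) ** 2).mean(axis=1)
mae = np.abs(x[None, :] - grid[:, None]).mean(axis=1)

best_mse = grid[np.argmin(mse)]  # constant with the lowest squared error
best_mae = grid[np.argmin(mae)]  # constant with the lowest absolute error

print(best_mse, x.mean())      # both about 22.0
print(best_mae, np.median(x))  # both about 3.0
```

The outlier pulls the squared-error optimum up to 22, while the absolute-error optimum stays at 3; that sensitivity is the usual argument for the median on skewed data.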

The need for blank filling

Machine learning models are mathematical formulas (or rely on mathematical formulas), so they need to work with numbers. When a value is missing, there’s no number to compute with, and most models can’t handle this situation by themselves. They need to see a number in every cell of the dataset, so we have to fill in the blanks to make the model work properly.
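A tiny illustration, assuming a hypothetical linear model with made-up weights `w` and bias `b`: a single blank, represented as NaN, turns the whole prediction into NaN.

```python
import numpy as np

# Hypothetical linear model: prediction = w . x + b (weights are made up)
w = np.array([0.5, -1.2, 2.0])
b = 0.3

x_complete = np.array([1.0, 2.0, 3.0])
x_blank = np.array([1.0, np.nan, 3.0])  # the second feature is missing

pred_complete = w @ x_complete + b  # a regular number
pred_blank = w @ x_blank + b        # NaN: one blank poisons the whole result

print(pred_complete, pred_blank)
```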

Blanks can have several origins. For example, the data may simply not exist in the database we extract it from. Or it may be corrupted, dirty, or even created manually, so it can contain errors and missing values due to human mistakes.

All these issues raise the need to fill in the blanks and clean our data.
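Before filling anything, we need to know where the blanks are. A minimal pandas sketch on a toy table (the column names and values are invented for illustration) counts them per column:

```python
import numpy as np
import pandas as pd

# Toy table; column names and values are made up for illustration
df = pd.DataFrame({
    "age": [25.0, np.nan, 40.0, 31.0, np.nan],
    "income": [3200.0, 4100.0, np.nan, 2800.0, 3900.0],
})

blank_counts = df.isna().sum()  # number of blanks per column
blank_share = df.isna().mean()  # fraction of rows that are blank, per column

print(blank_counts)
print(blank_share)
```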

Mean or median? Maybe none of them

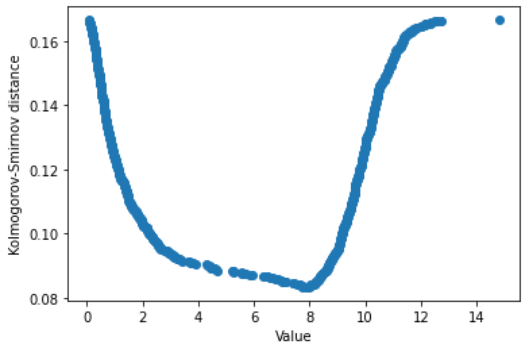

Numerical variables are often cleaned using the mean value or, if the variable is skewed (asymmetric), the median value. It’s an empirical approach that is widely used in machine learning. But can we be sure that a better value doesn’t exist?
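The empirical rule described above can be sketched as a small helper. The function name `fill_mean_or_median` and the skewness cut-off of 1.0 are assumptions for illustration, not a standard; pandas’ `Series.skew` and `Series.fillna` do the actual work.

```python
import numpy as np
import pandas as pd


def fill_mean_or_median(s: pd.Series, skew_threshold: float = 1.0) -> pd.Series:
    """Fill blanks with the median if the series is strongly skewed, else with the mean.

    skew_threshold is an illustrative cut-off (an assumption), not a standard value.
    """
    skewness = s.skew()  # NaN values are skipped by default
    if abs(skewness) > skew_threshold:
        fill_value = s.median()
    else:
        fill_value = s.mean()
    return s.fillna(fill_value)


symmetric = pd.Series([1.0, 2.0, 3.0, 4.0, 5.0, np.nan])
skewed = pd.Series([1.0, 1.0, 1.0, 2.0, 50.0, np.nan])

print(fill_mean_or_median(symmetric).iloc[-1])  # 3.0 (the mean)
print(fill_mean_or_median(skewed).iloc[-1])     # 1.0 (the median)
```

The large value in `skewed` drags its mean to 11, far from where most of its points lie, which is exactly the situation the median is meant to handle.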

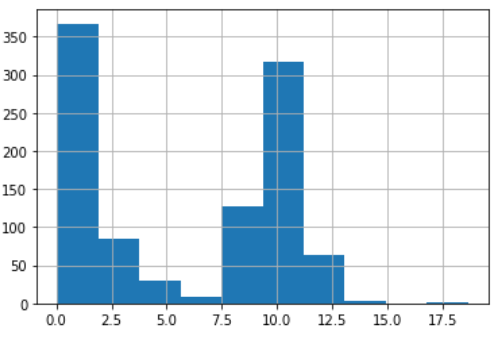

Let’s create a univariate dataset made of a mixture of a lognormal distribution and a normal distribution centered at 10, plus some missing values.

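```python
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd

np.random.seed(0)

# 500 lognormal values, 500 normal values centered at 10, and 200 blanks
col = pd.Series(np.concatenate((
    np.random.lognormal(size=500),
    np.random.normal(size=500, loc=10),
    [np.nan] * 200,
)))

# Histogram of the observed (non-missing) values
col.hist(bins=50)
plt.show()
```

This is its histogram.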
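To see why this dataset is a hard case for both statistics, a quick check of our own (not part of the original analysis) measures how much observed data lies close to the mean, the median, and the center of the normal component. The helper `share_near` and the half-width of 0.5 are arbitrary choices for this sketch.

```python
import numpy as np
import pandas as pd

# Rebuild the same series as above so this snippet runs on its own
np.random.seed(0)
col = pd.Series(np.concatenate((
    np.random.lognormal(size=500),
    np.random.normal(size=500, loc=10),
    [np.nan] * 200,
)))

observed = col.dropna()


def share_near(value: float, half_width: float = 0.5) -> float:
    """Fraction of observed points within +/- half_width of value (half_width is arbitrary)."""
    return float(((observed - value).abs() <= half_width).mean())


print("blanks:", col.isna().sum(), "of", len(col))
print("near mean:", share_near(col.mean()))
print("near median:", share_near(col.median()))
print("near 10 (center of the normal component):", share_near(10.0))
```

Because the two components are far apart, both the mean and the median should fall between the two peaks, where the observed data is comparatively sparse.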

Now it’s time to try filling the blanks with the mean and with the median. How do we choose between them? My personal experience and…